KAOHSIUNG, TAIWAN — In a move that underscores the physical infrastructure demands of the artificial intelligence revolution, ASE Technology Holding Co., Ltd. (NYSE:ASX) has announced a staggering $7 billion capital expenditure plan for 2026. The record-breaking investment, representing a 27% increase over its 2025 budget, marks a strategic pivot for the world’s largest outsourced semiconductor assembly and test (OSAT) provider as it positions itself as the "capacity gatekeeper" for the next generation of AI silicon.
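For readers who want the baseline behind the headline, the two figures quoted above (a $7 billion plan and a 27% increase) imply a 2025 budget that can be backed out with simple arithmetic. The sketch below is illustrative only: the variable names are ours, and it assumes the 27% figure is measured against the full 2025 budget.

```python
# Back out the implied 2025 budget from the two figures quoted in the article.
# Assumption: the 27% increase is relative to the full 2025 capital budget.
capex_2026_busd = 7.0   # 2026 plan, in billions of USD (from the article)
growth = 0.27           # stated increase over 2025 (from the article)

implied_2025_busd = capex_2026_busd / (1 + growth)
increase_busd = capex_2026_busd - implied_2025_busd

print(f"Implied 2025 budget: ${implied_2025_busd:.2f}B")
print(f"Year-over-year increase: ${increase_busd:.2f}B")
```

Under that assumption, the implied 2025 budget is roughly $5.5 billion, so the plan adds about $1.5 billion in annual spending.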

The announcement comes at a critical juncture for the industry. As leading-edge chip design hits the physical limits of traditional monolithic fabrication, the focus has shifted toward advanced packaging: the process of combining multiple smaller "chiplets" into a single, high-performance unit. By committing $7 billion to expand its facilities in Taiwan and Malaysia, ASE is betting that the future of AI lies not just in how transistors are made, but in how they are interconnected and cooled.

The Technical Frontier: Beyond Moore’s Law with VIPack and FOCoS

At the heart of ASE’s 2026 expansion is a suite of proprietary technologies designed to handle the "explosive" complexity of AI processors. The investment targets the mass-scale rollout of the VIPack™ platform, which utilizes Fan-Out Chip-on-Substrate (FOCoS) and "Bridge" technologies. Unlike previous generations of packaging that relied on simple wire bonding, FOCoS-Bridge uses silicon bridges to connect chiplets at an interconnect density nearly 200 times higher than that of traditional organic packages. This is essential for the low-latency communication required between high-bandwidth memory (HBM) and GPU cores found in the latest accelerators from NVIDIA (NASDAQ:NVDA) and AMD (NASDAQ:AMD).

Furthermore, a significant portion of the $7 billion is dedicated to addressing the "thermal bottleneck" of AI hardware. With modern AI server racks now drawing upwards of 120 kW, ASE’s upcoming K28 Smart Factory in Kaohsiung is being engineered to integrate liquid cooling and microfluidic channels directly into the package. Technical experts from firms like TechInsights have noted that this shift toward "thermal-aware packaging" is a radical departure from previous air-cooled standards. Additionally, ASE is scaling its "PowerSiP" technology, which integrates power delivery circuits within the package to reduce energy loss by up to 50%, a critical requirement as chips move toward sub-1nm equivalent performance levels.

Market Dynamics: Pricing Power and the "Second Supply Chain"

The financial scale of this CapEx plan has sent ripples through the semiconductor market, with analysts from Morgan Stanley and Goldman Sachs identifying a structural shift in the industry's power balance. For the first time in decades, OSAT providers like ASE are wielding significant pricing power, with reports indicating ASE will raise backend packaging prices by 5% to 20% in 2026. This price hike is driven by a chronic supply-demand gap, where even the massive internal capacity of Taiwan Semiconductor Manufacturing Co. (NYSE:TSM) cannot meet the global demand for CoWoS (Chip-on-Wafer-on-Substrate) packaging.

By tripling its "CoWoS-equivalent" capacity to 25,000 wafers per month, ASE is effectively becoming the indispensable "second supply chain" for the world's tech giants. While competitors like Amkor Technology (NASDAQ:AMKR) and Intel (NASDAQ:INTC) are also expanding their advanced packaging footprints, ASE’s 44.6% market share and its "dual-engine" growth model, which leverages both its Taiwan hubs and a massive 3.4-million-square-foot expansion in Penang, Malaysia, provide a strategic advantage. This geographic diversification is particularly attractive to hyperscalers like Amazon and Google, which are increasingly seeking supply chain resilience amid geopolitical tensions in the Taiwan Strait.
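The capacity figure can be unpacked the same way. A rough sketch, assuming "tripling" means the 25,000 wafers-per-month target is exactly three times the current level (the article does not state the starting point, so the baseline below is an inference, and the variable names are ours):

```python
# Infer the starting capacity implied by "tripling ... to 25,000 wafers per month".
# Assumption: the target is exactly 3x the current CoWoS-equivalent capacity.
target_wpm = 25_000   # wafers per month after expansion (from the article)
multiple = 3          # "tripling" (from the article)

implied_baseline_wpm = target_wpm / multiple
added_wpm = target_wpm - implied_baseline_wpm

print(f"Implied current capacity: {implied_baseline_wpm:,.0f} wafers/month")
print(f"Capacity to be added:     {added_wpm:,.0f} wafers/month")
```

On that reading, ASE would be starting from roughly 8,300 wafers per month and adding about 16,700.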

The Chiplet Revolution: Redefining the Broader AI Landscape

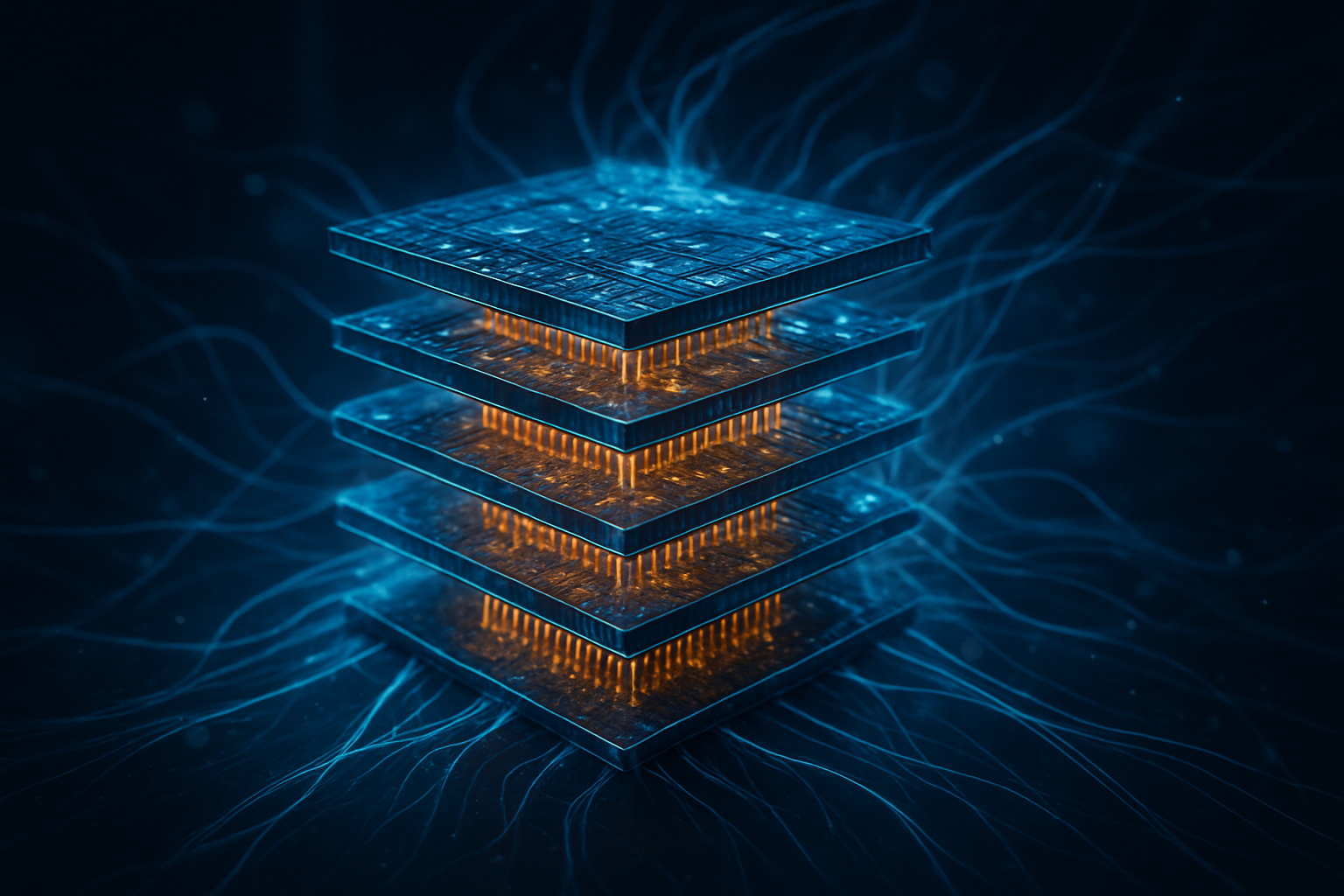

ASE’s massive investment serves as the loudest signal yet that the "Chiplet Era" has arrived. For decades, Moore’s Law was driven by shrinking transistors on a single piece of silicon. Today, that progress has slowed and become prohibitively expensive. The industry has entered what experts call the "More than Moore" phase, where the integration of heterogeneous components—CPUs, GPUs, and specialized AI NPU chiplets—becomes the primary driver of performance gains. ASE’s $7 billion bet confirms that advanced packaging is no longer a "backend" afterthought but the very frontier of semiconductor innovation.

This development also highlights the shifting landscape of global AI sovereignty. By expanding its Malaysian facilities alongside its Taiwan strongholds, ASE is facilitating a globalized manufacturing model that can survive localized disruptions. However, this transition is not without concerns. The reliance on advanced packaging creates new vulnerabilities, particularly regarding the supply of specialized ABF substrates and the rising cost of the high-purity metals required for 3D stacking. Much like the wafer shortages of 2021, the industry now faces a potential "packaging crunch" that could gate the speed of AI deployment for years to come.

Looking Ahead: Co-Packaged Optics and the 2027 Horizon

The 2026 expansion is likely only the beginning of a decade-long infrastructure cycle. Looking toward 2027 and 2028, ASE has already begun teasing the integration of Co-Packaged Optics (CPO). This technology moves optical engines directly onto the package substrate, replacing copper wires with light-based communication to further reduce the massive power consumption of AI data centers. Experts predict that as AI models continue to scale in parameter count, CPO will become a mandatory requirement for the networking fabric that connects thousands of GPUs.

Near-term challenges remain, particularly in achieving high yields for vertically stacked 3D architectures. While 2.5D packaging (placing chips side-by-side) is maturing, true 3D stacking (placing chips on top of each other) remains a high-risk, high-reward endeavor due to the extreme heat generated in the center of the stack. ASE’s investment in "Smart Factories" and AI-driven quality control is intended to mitigate these risks, but the learning curve for these next-generation facilities will be steep as they begin trial production in late 2026.

Conclusion: The Physical Foundation of Intelligence

ASE Technology’s record $7 billion CapEx plan for 2026 represents a watershed moment in the history of artificial intelligence. It marks the point where the industry’s greatest bottleneck shifted from the design of AI algorithms to the physical assembly of the hardware that runs them. By doubling its leading-edge packaging revenue and aggressively expanding its global footprint, ASE is cementing its role as the essential partner for every major player in the AI ecosystem.

In the coming weeks and months, the industry will be watching for the first equipment move-ins at the K28 facility in Kaohsiung and further details on the "FOPLP" (Fan-Out Panel Level Packaging) lines designed to bring economies of scale to massive AI chips. As 2026 unfolds, ASE’s ability to execute this $7 billion expansion will largely determine the pace at which the next generation of AI breakthroughs can be delivered to the world.

This content is intended for informational purposes only and represents analysis of current AI developments.
