At the 2026 Consumer Electronics Show (CES), NVIDIA (NASDAQ: NVDA) redefined the boundaries of artificial intelligence by unveiling the Nemotron 3 family of open models. Moving beyond the text-and-image paradigms of previous years, the new suite is specifically engineered for "agentic AI"—autonomous systems capable of multi-step reasoning, tool use, and complex decision-making. This launch marks a pivotal shift for the tech giant as it transitions from a provider of general-purpose large language models (LLMs) to the architect of a comprehensive "Physical AI" ecosystem.

The announcement signals Nvidia's ambition to move AI off the screen and into the physical world. By integrating the Nemotron 3 reasoning engine with its newly announced Cosmos world foundation models and Rubin hardware platform, Nvidia is providing the foundational software and hardware stack for the next generation of humanoid robots, autonomous vehicles, and industrial automation systems. The immediate significance is clear: Nvidia is no longer just selling the "shovels" for the AI gold rush; it is now providing the brains and the bodies for the autonomous workforce of the future.

Technical Mastery: The Hybrid Mamba-Transformer Architecture

The Nemotron 3 family represents a significant technical departure from the industry-standard Transformer-only models. Built on a hybrid Mamba-Transformer Mixture-of-Experts (MoE) architecture, these models combine the reasoning accuracy of Transformers with the low latency and long-context efficiency of Mamba-2. The family comes in three sizes: the 30B Nemotron 3 Nano for local edge devices, the 100B Nemotron 3 Super for enterprise automation, and the 500B Nemotron 3 Ultra, which sets new benchmarks for complex scientific planning and coding.
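A rough estimate of weight memory helps explain why the smallest tier is the one aimed at edge devices. The sketch below multiplies the parameter counts quoted above by an assumed storage precision. The precisions and the function name are illustrative assumptions rather than published deployment settings, and the estimate ignores activations, caches, and runtime overhead.

```python
# Rough weight-memory estimate: parameters x bits per parameter.
# Parameter counts are the tier sizes quoted in the article; the
# precisions (16-bit, 4-bit) are illustrative assumptions, not
# published deployment settings.

TIERS = {
    "Nemotron 3 Nano": 30e9,
    "Nemotron 3 Super": 100e9,
    "Nemotron 3 Ultra": 500e9,
}


def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Return the memory needed to hold the weights, in GB (1 GB = 1e9 bytes)."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9


if __name__ == "__main__":
    for name, params in TIERS.items():
        fp16 = weight_memory_gb(params, 16)
        int4 = weight_memory_gb(params, 4)
        print(f"{name}: {fp16:.0f} GB at 16-bit, {int4:.0f} GB at 4-bit")
```

Under these assumptions, Nano needs about 60 GB for weights at 16-bit and about 15 GB at 4-bit, while Ultra needs about 1,000 GB at 16-bit. In an MoE model only some experts are active per token, but all of the weights still have to be stored, so the total parameter count is what drives this figure.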

One of the most striking technical features is the 1-million-token context window, which lets agents ingest and "remember" entire technical manuals or weeks of operational data in a single pass. Nvidia has also introduced granular "Reasoning Controls," including a "Thinking Budget" that lets developers switch between high-speed responses and deep-reasoning modes. This flexibility is essential for agentic workflows in which a robot might need to react instantly to a physical hazard but spend several seconds planning a complex assembly task. Initial reactions from the AI research community have been overwhelmingly positive, with experts noting that the 4x throughput increase over Nemotron 2, when paired with the new Rubin GPUs, largely removes the latency bottleneck that previously plagued real-time agentic AI.
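One way to picture the hazard-versus-assembly trade-off is as a small policy that converts a latency deadline into a cap on reasoning tokens. The following is a minimal sketch under stated assumptions: the `Task` type, the `thinking_budget` function, the decode speed, and the thresholds are all hypothetical and do not come from any NVIDIA API.

```python
# Hypothetical sketch: derive a reasoning ("thinking") token budget from a
# latency deadline. None of these names or numbers come from an NVIDIA API.
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    deadline_ms: int  # how long the agent may deliberate before it must act


def thinking_budget(task: Task,
                    tokens_per_second: float = 200.0,  # assumed decode speed
                    min_useful: int = 64,              # assumed floor for useful reasoning
                    max_budget: int = 4096) -> int:    # assumed hard cap
    """Return how many reasoning tokens fit in the deadline (0 = respond at once)."""
    affordable = int(task.deadline_ms * tokens_per_second / 1000)
    if affordable < min_useful:
        return 0  # too little time to reason usefully: take the fast path
    return min(affordable, max_budget)


if __name__ == "__main__":
    for task in (Task("avoid_obstacle", deadline_ms=50),
                 Task("plan_assembly", deadline_ms=5000)):
        print(task.name, thinking_budget(task))
```

With the assumed numbers, the 50 ms hazard response gets a budget of 0 (answer immediately), while the 5-second assembly plan gets 1,000 reasoning tokens. A real deployment would pass the resulting budget to whatever reasoning control the serving stack exposes.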

Strategic Dominance: Reshaping the Competitive Landscape

The release of Nemotron 3 as an open-model family places significant pressure on proprietary AI labs like OpenAI and Google (NASDAQ: GOOGL). By offering state-of-the-art (SOTA) reasoning capabilities that are optimized to run with maximum efficiency on Nvidia hardware, the company is incentivizing developers to build within its ecosystem rather than relying on closed APIs. This strategy directly benefits enterprise giants like Siemens (OTC: SIEGY), which has already announced plans to integrate Nemotron 3 into its industrial design software to create AI agents that assist in complex semiconductor and PCB layout.

For startups and smaller AI labs, the availability of these high-performance open models lowers the barrier to entry for developing sophisticated agents. However, the true competitive advantage lies in Nvidia's vertical integration. Because Nemotron 3 is specifically tuned for the Rubin platform—utilizing the new Vera CPU and BlueField-4 DPU for optimized data movement—competitors who lack integrated hardware stacks may find it difficult to match the performance-to-cost ratio Nvidia is now offering. This positioning turns Nvidia into a "one-stop shop" for Physical AI, potentially disrupting the market for third-party orchestration layers and middleware.

The Physical AI Vision: Bridging the Digital-Physical Divide

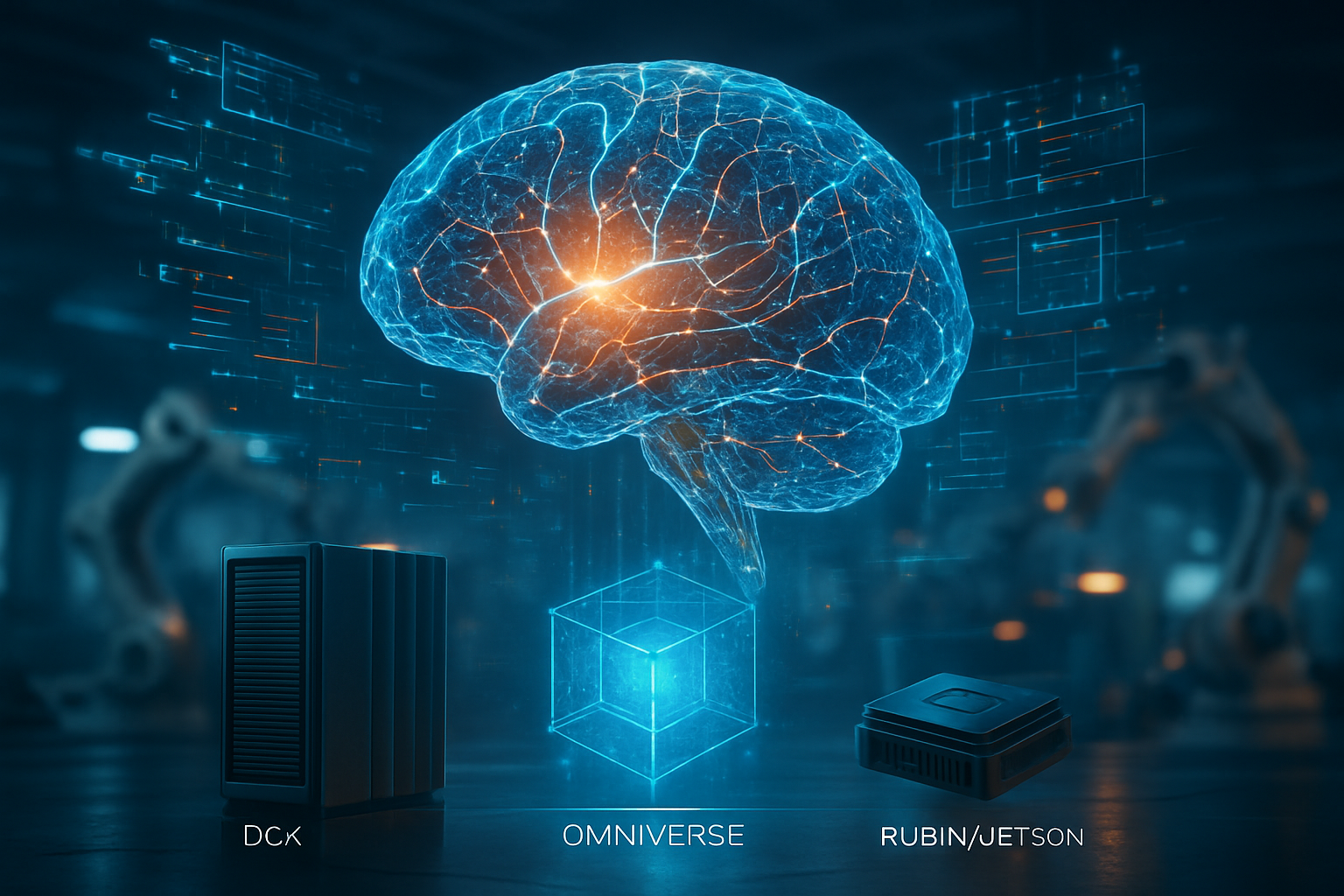

The "Physical AI" strategy announced at CES 2026 is perhaps the most ambitious roadmap in Nvidia's history. It is built on a "three-computer" architecture: the DGX for training, Omniverse for simulation, and Jetson or DRIVE for real-time operation. Within this framework, Nemotron 3 serves as the "logic" or the brain, while the new NVIDIA Cosmos models act as the "intuition." Cosmos models are world foundation models designed to understand physics—predicting how objects fall, slide, or interact—which allows robots to navigate the real world with human-like common sense.

This integration is a milestone in the broader AI landscape, moving beyond the "stochastic parrot" critique of early LLMs. By grounding reasoning in physical reality, Nvidia is addressing one of the most significant hurdles in robotics: the "sim-to-real" gap. Unlike previous breakthroughs that focused on digital intelligence, such as GPT-4, the combination of Nemotron and Cosmos allows for "Physical Common Sense," where an AI doesn't just know how to describe a hammer but understands the weight, trajectory, and force required to use one. This shift places Nvidia at the forefront of the "General Purpose Robotics" trend that many believe will define the late 2020s.

The Road Ahead: Humanoids and Autonomous Realities

In the near term, the Nemotron-Cosmos stack will be applied first in humanoid robotics and autonomous transport. Nvidia’s Isaac GR00T N1.6, a Vision-Language-Action (VLA) model, already uses Nemotron 3 to enable robots to perform bimanual manipulation and navigate dynamic, crowded workspaces. In the automotive sector, the new Alpamayo 1 model, developed in partnership with Mercedes-Benz (OTC: MBGYY), uses Nemotron's chain-of-thought reasoning to let self-driving cars explain their decisions to passengers, such as why the car is slowing down for a distracted pedestrian.

Despite the excitement, significant challenges remain, particularly regarding the safety and reliability of autonomous agents in unconstrained environments. Experts predict that the next two years will be focused on "alignment for action," ensuring that agentic AI follows strict safety protocols when interacting with humans. As these models become more autonomous, the industry will likely see a surge in demand for "Inference Context Memory Storage" and other hardware-level solutions to manage the massive data flows required by multi-agent systems.

A New Chapter in the AI Revolution

Nvidia’s announcements at CES 2026 represent a definitive closing of the chapter on "Chatbot AI" and the opening of the era of "Agentic Physical AI." The Nemotron 3 family provides the necessary reasoning depth, while the Cosmos models provide the physical grounding, creating a holistic system that can finally interact with the world in a meaningful way. This development is likely to be remembered as the moment when AI moved from being a tool we talk to, to a partner that works alongside us.

As we move into the coming months, the industry will be watching closely to see how quickly these models are adopted by the robotics and automotive sectors. With the Rubin platform entering full production and partnerships with global leaders already in place, Nvidia has set a high bar for the rest of the tech industry. The long-term impact of this development could be a fundamental shift in global productivity, as autonomous agents begin to take on roles in manufacturing, logistics, and even domestic care that were once thought to be decades away.

