As of February 2, 2026, NASA’s Dragonfly mission has officially transitioned into Phase D, the stage of NASA’s project life cycle that covers system assembly, integration, and test. The phase opens with "Iron Bird" integration and testing at the Johns Hopkins Applied Physics Laboratory (APL). The milestone means the mission has moved from the drawing board to the physical assembly of flight hardware. Dragonfly, a nuclear-powered rotorcraft destined for Saturn’s moon Titan, is billed as the most significant leap in autonomous deep-space exploration since the landing of the Perseverance rover. With launch scheduled for July 2028 aboard a SpaceX Falcon Heavy, the team is now racing to finalize the AI that will serve as the craft's "brain" during its multi-year stay on Titan.

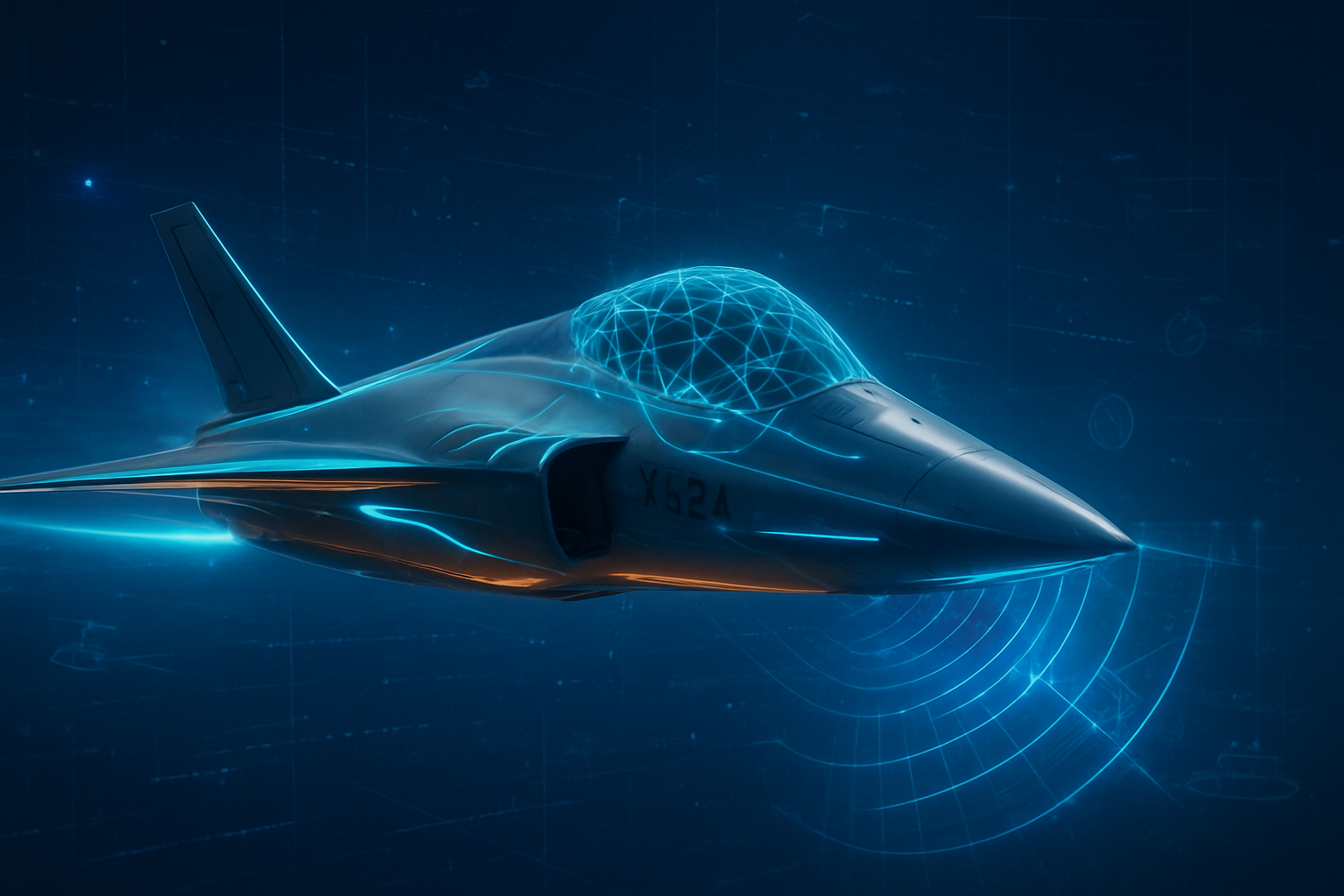

The immediate significance of this development lies in the complexity of the environment Dragonfly must operate in. Titan is approximately 1.5 billion kilometers from Earth, which puts the one-way communication delay at roughly 70 to 90 minutes, depending on where Earth and Saturn are in their orbits. This lag renders traditional "joystick" piloting impossible. Unlike the Mars rovers, which crawl at a measured pace and often wait for ground-station approval before moving, Dragonfly is designed for fast aerial sorties across Titan’s dunes and craters. To survive, it needs a level of hierarchical autonomy never before seen in a planetary explorer: it must make split-second decisions about flight stability, hazard avoidance, and even scientific prioritization without human intervention.
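The delay figure follows directly from dividing distance by the speed of light. A minimal sketch of that arithmetic, using the article's 1.5 billion kilometer figure (the function name is illustrative, not from any mission software):

```python
# Illustrative only: one-way light time for a given Earth-Titan distance.
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact, by definition of the metre


def one_way_delay_minutes(distance_km: float) -> float:
    """Return the one-way signal travel time in minutes."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


delay = one_way_delay_minutes(1.5e9)
print(f"one-way: {delay:.1f} min, round trip: {2 * delay:.1f} min")
```

At 1.5 billion kilometers this comes to a little over 83 minutes each way, so a command-and-confirm round trip takes well over two and a half hours.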

Technical Foundations: From Visual Odometry to Neuromorphic Acceleration

At the heart of Dragonfly’s navigation suite is an advanced Terrain Relative Navigation (TRN) system, which has evolved significantly from the version used by Perseverance. In Titan’s thick, hazy atmosphere, which is about four times denser than Earth's at the surface, Dragonfly’s AI uses U-Net-like deep learning architectures for real-time Hazard Detection and Avoidance (HDA). During its 105-minute descent and subsequent "hops" of up to 8 kilometers, the craft’s AI processes monocular grayscale imagery and lidar data to infer terrain slope and roughness. This allows the rotorcraft to identify safe landing zones on the fly, a critical capability given that much of Titan remains unmapped at the resolutions required for landing.
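To make "slope and roughness" concrete, here is a minimal sketch of a classical geometric hazard check on a small elevation grid. It is not the U-Net approach described above and not Dragonfly's flight code; the grid, cell size, and thresholds are all assumed values chosen for illustration.

```python
import math


def slope_deg(grid, r, c, cell_m):
    """Slope in degrees at an interior cell, via central differences."""
    dz_dx = (grid[r][c + 1] - grid[r][c - 1]) / (2 * cell_m)
    dz_dy = (grid[r + 1][c] - grid[r - 1][c]) / (2 * cell_m)
    return math.degrees(math.atan(math.hypot(dz_dx, dz_dy)))


def roughness_m(grid, r, c):
    """Standard deviation of heights in the 3x3 window around a cell."""
    window = [grid[i][j] for i in (r - 1, r, r + 1) for j in (c - 1, c, c + 1)]
    mean = sum(window) / len(window)
    return math.sqrt(sum((h - mean) ** 2 for h in window) / len(window))


def safe_cells(grid, cell_m=1.0, max_slope_deg=10.0, max_roughness_m=0.2):
    """Return interior (row, col) cells that pass both hypothetical limits."""
    rows, cols = len(grid), len(grid[0])
    return [
        (r, c)
        for r in range(1, rows - 1)
        for c in range(1, cols - 1)
        if slope_deg(grid, r, c, cell_m) <= max_slope_deg
        and roughness_m(grid, r, c) <= max_roughness_m
    ]


# Flat 5x5 terrain (heights in meters) with one 1 m boulder at row 1, col 3.
demo_grid = [[0.0] * 5 for _ in range(5)]
demo_grid[1][3] = 1.0
print(safe_cells(demo_grid))
```

Every cell whose 3x3 neighborhood includes the boulder is rejected, leaving only the cells along the left and bottom of the interior. Note that this simple roughness measure also grows on tilted terrain, since the heights are not detrended; a learned model would presumably infer comparable quantities from imagery rather than from a ready-made height map.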

A major technical milestone finalized in late 2025 is the integration of the SAKURA-II AI co-processor. Moving away from traditional Field-Programmable Gate Arrays (FPGAs), this radiation-hardened AI accelerator provides the computational throughput required for real-time computer vision within a tight power budget. The hardware enables "Science Autonomy," a secondary AI layer developed at NASA Goddard. This system acts as an onboard curator, autonomously analyzing data from the Dragonfly Mass Spectrometer (DraMS) to identify biologically relevant chemical signatures. By prioritizing the most interesting samples for transmission, the AI ensures that mission-critical discoveries are downlinked first, maximizing the value of the mission’s limited bandwidth.
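The prioritization idea can be sketched as a greedy queue: rank samples by an interest score, then fill the available downlink budget from the top. Everything below is hypothetical, including the `Sample` fields, the scores, the sizes, and the budget; the actual Science Autonomy scoring is not described in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    name: str
    interest: float  # hypothetical score in [0, 1] from an onboard classifier
    size_kb: int     # size of the data product to transmit


def plan_downlink(samples, budget_kb):
    """Greedily pick the highest-interest samples that still fit the budget."""
    queue = []
    remaining = budget_kb
    for sample in sorted(samples, key=lambda s: s.interest, reverse=True):
        if sample.size_kb <= remaining:
            queue.append(sample)
            remaining -= sample.size_kb
    return queue


candidates = [
    Sample("dune_sand", 0.9, 400),
    Sample("background", 0.2, 300),
    Sample("crater_rim", 0.7, 500),
    Sample("interdune", 0.5, 200),
]
downlink = plan_downlink(candidates, budget_kb=1000)
print([s.name for s in downlink])
```

With a 1000 KB budget the two highest-scoring samples use 900 KB, and neither remaining sample fits in the last 100 KB, so they wait for a later pass. A greedy pass is simple and predictable but not optimal in general; a real system might instead weigh interest per kilobyte.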

This approach differs fundamentally from previous missions by shifting the decision-making burden from Earth to the spacecraft itself. Previous missions relied on "thinking-while-driving" for obstacle avoidance; Dragonfly implements "thinking-while-flying." The AI must manage not only navigation but also the thermal dynamics of its Multi-Mission Radioisotope Thermoelectric Generator (MMRTG). In Titan’s cryogenic environment, the AI autonomously adjusts internal heat distribution to keep the electronics from freezing or overheating, balancing the craft's thermal state against its flight power requirements in real time.

The Industrial Ripple Effect: Lockheed Martin and the Space AI Market

The successful transition to hardware integration has sent a clear signal to the aerospace and defense sectors. Lockheed Martin (NYSE: LMT), the prime contractor for the cruise stage and aeroshell, stands as a primary beneficiary of the Dragonfly program. The mission’s rigorous requirements for autonomous thermal management and entry, descent, and landing (EDL) systems have allowed Lockheed Martin to solidify its lead in high-stakes autonomous aerospace engineering. Industry analysts suggest that the "flight-proven" AI frameworks developed for Dragonfly will likely be adapted for future defense applications, particularly in long-endurance autonomous drones operating in contested or signal-denied environments on Earth.

Beyond traditional defense giants, the mission highlights a growing synergy between specialized AI labs and space agencies. While the core flight software was developed by APL and NASA, the mission has used large language models and generative AI on the ground to assist with mission planning simulations. In late 2025, NASA demonstrated the use of advanced LLMs to process orbital imagery and generate valid navigation waypoints, a technique now being integrated into Dragonfly’s ground-support systems. This trend points to a change in how mission architectures are designed, moving toward a model where AI agents handle the preliminary "drudge work" of trajectory planning and anomaly detection, allowing human scientists to focus on high-level strategy.

Companies involved in Dragonfly’s AI stand to gain a substantial strategic advantage. As the "Space AI" market expands, the ability to demonstrate hardware and software that can survive the radiation of deep space and the cryogenic temperatures of the outer solar system becomes a premium credential. This positioning is critical as private entities like SpaceX and Blue Origin look toward long-term goals of lunar and Martian colonization, where autonomous resource management and navigation will be baseline requirements for success.

A New Era of Autonomous Deep-Space Exploration

The Dragonfly mission fits into a broader trend in the AI landscape: the transition from centralized "cloud" AI to hyper-efficient "edge" AI. In the context of deep space, there is no cloud; the edge is everything. Dragonfly is a testament to how far autonomous systems have come since the simple programmed sequences of the Voyager era. It represents a paradigm shift where the spacecraft is no longer just a remote-controlled sensor but a robotic field researcher. This shift toward "Science Autonomy" is a milestone comparable to the first successful autonomous landing on Mars, as it marks the first time AI will be given the authority to decide which scientific data is "important" enough to send home.

However, this level of autonomy brings potential concerns, primarily regarding the "black box" nature of deep learning in mission-critical environments. If the HDA system misidentifies a methane pool as a solid landing site, there is no way for Earth to intervene. To mitigate this, NASA has implemented "Hierarchical Autonomy," where human controllers send high-level waypoint commands, but the AI holds final veto power based on its local sensor data. This collaborative model between human and machine is becoming the gold standard for AI deployment in high-stakes, unpredictable environments.
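The veto arrangement can be sketched as follows: the ground supplies an ordered list of candidate sites, and the onboard logic accepts the first one that passes its own local checks, or declines them all. The `SiteAssessment` fields, the thresholds, and the site names are hypothetical and only illustrate the division of authority described above.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass(frozen=True)
class SiteAssessment:
    slope_deg: float
    roughness_m: float
    looks_liquid: bool  # hypothetical flag from onboard hazard detection


def onboard_accepts(a: SiteAssessment,
                    max_slope_deg: float = 10.0,
                    max_roughness_m: float = 0.2) -> bool:
    """Local safety check; a False result vetoes the ground's choice."""
    return (not a.looks_liquid
            and a.slope_deg <= max_slope_deg
            and a.roughness_m <= max_roughness_m)


def select_site(commanded: Sequence[str],
                assess: Callable[[str], SiteAssessment]) -> Optional[str]:
    """Return the first commanded site the onboard check accepts, else None."""
    for site in commanded:
        if onboard_accepts(assess(site)):
            return site
    return None  # every option vetoed: hold position and wait for new commands


# What the craft "sees" locally, which may contradict the ground's map.
local_view = {
    "primary": SiteAssessment(3.0, 0.05, looks_liquid=True),
    "backup": SiteAssessment(4.0, 0.10, looks_liquid=False),
}
chosen = select_site(["primary", "backup"], local_view.__getitem__)
print(chosen)  # backup
```

Here the ground's first choice is vetoed because local sensing flags it as liquid, so the craft falls through to the backup. If every commanded site fails, the sketch returns `None` instead of guessing.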

Comparisons to past milestones are frequent in the aerospace community. If the Mars rovers were the equivalent of early self-driving cars, Dragonfly is the equivalent of a fully autonomous, long-range drone operating in a blizzard. Its success would prove that AI can handle "2 hours of terror"—the extended, complex descent through Titan’s thick atmosphere—which is far more operationally demanding than the "7 minutes of terror" associated with Mars landings.

Future Horizons: From Titan to the Icy Moons

Looking ahead, the technologies being refined for Dragonfly in early 2026 are expected to pave the way for even more ambitious missions. Experts predict that the autonomous flight algorithms and SAKURA-II hardware will be the blueprint for future "Cryobot" missions to Europa or Enceladus, where robots must navigate through thick ice shells to reach subsurface oceans. In these environments, communication will be even more restricted, making Dragonfly’s level of science autonomy a mandatory requirement rather than a luxury.

In the near term, the "Iron Bird" tests at APL should yield a wealth of data on how Dragonfly’s subsystems interact. Any anomalies discovered during this 2026 testing phase will be critical for refining the final flight software. Challenges remain, particularly in the realm of "long-tail" scenarios, meaning unpredictable conditions on Titan such as methane rain or shifting dunes, that the AI must be robust enough to handle. The next 24 months will focus heavily on "adversarial simulation," where the AI is subjected to thousands of simulated Titan environments to verify that it can recover from as wide a range of flight errors as possible.
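A simulation campaign of this kind can be pictured as a seeded Monte Carlo loop: draw random conditions, run the controller, and log every failure for later study. The sketch below uses a deliberately trivial pass/fail rule in place of a real flight simulator; the gust range, dropout probability, and limits are invented for illustration.

```python
import random


def simulate_hop(gust_m_s: float, sensor_dropout: bool,
                 max_gust_m_s: float = 8.0) -> bool:
    """Toy model: the tolerable gust is halved while a sensor is dropped out."""
    limit = max_gust_m_s * (0.5 if sensor_dropout else 1.0)
    return gust_m_s <= limit


def run_campaign(n_trials: int, seed: int):
    """Run seeded random trials and return the failing cases."""
    rng = random.Random(seed)  # fixed seed makes every failure reproducible
    failures = []
    for trial in range(n_trials):
        gust = rng.uniform(0.0, 10.0)   # hypothetical gust speed in m/s
        dropout = rng.random() < 0.1    # hypothetical 10% sensor dropout rate
        if not simulate_hop(gust, dropout):
            failures.append((trial, gust, dropout))
    return failures


failures = run_campaign(n_trials=1000, seed=42)
print(f"{len(failures)} of 1000 simulated hops failed")
```

Seeding the generator matters more than the toy physics: any failing trial can be replayed exactly, which is what makes rare "long-tail" cases debuggable.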

Summary and Final Thoughts

NASA’s Dragonfly mission represents a watershed moment in the history of artificial intelligence and space exploration. By integrating advanced deep learning, neuromorphic co-processors, and autonomous data prioritization, the mission is poised to turn a distant, mysterious moon into a laboratory for the next generation of AI. As of February 2026, the transition into hardware integration marks the final stretch of the mission's development, moving it one step closer to its 2028 launch.

The significance of Dragonfly lies not just in the potential for scientific discovery on Titan, but in the validation of AI as a reliable pilot in some of the most extreme environments known. For the tech industry, it is a masterclass in edge computing and robust software design. In the coming weeks and months, all eyes will be on the APL integration labs as the "Iron Bird" begins its first simulated flights. These tests will determine whether the AI "brain" of Dragonfly is truly ready to carry human curiosity into the outer solar system.
