In a move that has sent shockwaves through the semiconductor and cloud computing industries, OpenAI has reportedly struck a landmark $10 billion "chips-for-equity" deal with Amazon (NASDAQ: AMZN). The strategic pivot, finalized in early 2026 after advanced negotiations, centers on OpenAI’s commitment to migrate a large portion of its training and inference workloads to Amazon’s proprietary Trainium silicon. The deal effectively ends OpenAI’s exclusive reliance on NVIDIA (NASDAQ: NVDA) hardware and marks a significant cooling of its once-monolithic relationship with Microsoft (NASDAQ: MSFT).

The agreement is the cornerstone of OpenAI’s new "multi-vendor" infrastructure strategy, designed to insulate the AI giant from the supply chain bottlenecks and "NVIDIA tax" that have defined the last three years of the AI boom. By integrating Amazon’s next-generation Trainium 3 architecture into its core stack, OpenAI is not just diversifying its cloud providers—it is fundamentally rewriting the economics of large language model (LLM) development. This $10 billion investment is paired with a staggering $38 billion, seven-year cloud services agreement with Amazon Web Services (AWS), positioning Amazon as a primary engine for OpenAI’s future frontier models.

The Technical Leap: Trainium 3 and the NKI Breakthrough

At the heart of this transition is the Trainium 3 accelerator, unveiled by Amazon at the end of 2025. Built on a 3nm process node, Trainium 3 delivers 2.52 PFLOPs of FP8 compute performance, more than double that of its predecessor. More critically, the chip boasts a 4x improvement in energy efficiency, a vital metric as OpenAI’s power requirements begin to rival those of small nations. With 144GB of HBM3e memory, bandwidth quoted at up to 9 TB/s, and PCIe Gen 6 connectivity, Trainium 3 is the first custom ASIC (Application-Specific Integrated Circuit) to credibly challenge NVIDIA’s Blackwell and upcoming Rubin architectures in high-end training performance.

The technical catalyst that made this migration possible is the Neuron Kernel Interface (NKI). Historically, AI labs were "locked in" to NVIDIA’s CUDA ecosystem because custom silicon lacked the software flexibility required for complex, evolving model architectures. NKI changes this by allowing OpenAI’s performance engineers to write custom kernels directly for the Trainium hardware. This level of low-level optimization is essential for "Project Strawberry"—OpenAI’s suite of reasoning-heavy models—which require highly efficient memory-to-compute ratios that standard GPUs struggle to maintain at scale.
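To make the "memory-to-compute ratio" point concrete, here is a minimal roofline-style sketch in Python. It uses only the two headline figures quoted in this article (2.52 PFLOPs of FP8 compute and up to 9 TB/s of bandwidth) and assumes the bandwidth figure refers to memory bandwidth. The function names and the example intensities are hypothetical, chosen for illustration, and the result is a back-of-the-envelope estimate rather than a benchmark.

```python
# Roofline-style estimate built from the figures quoted in the article.
# Assumption: the quoted "up to 9 TB/s" is treated as memory bandwidth.
PEAK_FP8_FLOPS = 2.52e15  # 2.52 PFLOPs, in FLOPs per second
PEAK_MEM_BW = 9e12        # 9 TB/s, in bytes per second


def ridge_point(peak_flops: float, peak_bw: float) -> float:
    """FLOPs per byte a kernel needs before compute becomes the limit."""
    return peak_flops / peak_bw


def attainable_flops(
    intensity: float,
    peak_flops: float = PEAK_FP8_FLOPS,
    peak_bw: float = PEAK_MEM_BW,
) -> float:
    """Upper bound on throughput for a kernel with the given
    arithmetic intensity (FLOPs performed per byte moved)."""
    return min(peak_flops, intensity * peak_bw)


if __name__ == "__main__":
    print(f"ridge point: {ridge_point(PEAK_FP8_FLOPS, PEAK_MEM_BW):.0f} FLOPs/byte")
    # Hypothetical intensities: a memory-bound and a compute-bound kernel.
    for intensity in (2.0, 1000.0):
        share = attainable_flops(intensity) / PEAK_FP8_FLOPS
        print(f"intensity {intensity:>6.0f}: {share:.1%} of peak")
```

Under these assumptions, a kernel needs on the order of 280 FLOPs per byte moved before the chip's compute, rather than its memory system, becomes the bottleneck. That is why hand-written kernels that keep data on-chip matter for memory-bound workloads.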

The initial reaction from the AI research community has been one of cautious validation. Experts note that while NVIDIA remains the "gold standard" for raw flexibility and peak performance in frontier research, the specialized nature of Trainium 3 allows for a 40% better price-performance ratio for the high-volume inference tasks that power ChatGPT. By moving inference to Trainium, OpenAI can significantly lower its "cost-per-token," a move that is seen as essential for the company's long-term financial sustainability.
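A quick worked example shows what a 40% price-performance gain means for cost-per-token. The sketch below assumes "40% better price-performance" means 1.4x as much work per dollar. The $10 baseline is a purely hypothetical number used for illustration and does not come from OpenAI or AWS pricing.

```python
def relative_cost(price_perf_gain: float) -> float:
    """Cost of the same work relative to the baseline, given a fractional
    price-performance gain (0.40 means 1.4x the work per dollar)."""
    return 1.0 / (1.0 + price_perf_gain)


def cost_per_million_tokens(baseline_usd: float, price_perf_gain: float) -> float:
    """Scale a baseline cost by the relative cost implied by the gain."""
    return baseline_usd * relative_cost(price_perf_gain)


if __name__ == "__main__":
    gain = 0.40            # the 40% figure quoted above
    baseline = 10.00       # hypothetical USD per million tokens
    new_cost = cost_per_million_tokens(baseline, gain)
    saving = 1.0 - relative_cost(gain)
    print(f"new cost: ${new_cost:.2f} per million tokens")
    print(f"saving:   {saving:.1%}")
```

Under that reading, the same workload costs about 71% of the baseline, a saving of roughly 29% rather than 40%, because the gain applies to work per dollar and not to the bill itself.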

Reshaping the Cloud Wars: Amazon’s Ascent and Microsoft’s New Reality

This deal fundamentally alters the competitive landscape of the "Big Three" cloud providers. For years, Microsoft (NASDAQ: MSFT) enjoyed a privileged position as the exclusive cloud provider for OpenAI. However, in late 2025, Microsoft officially waived its "right of first refusal," signaling a transition to a more open, competitive relationship. While Microsoft remains a 27% shareholder in OpenAI, the AI lab is now spreading roughly $600 billion in compute commitments across Microsoft Azure, AWS, and Oracle (NYSE: ORCL) through 2030.

Amazon stands as the primary beneficiary of this shift. By securing OpenAI as an anchor tenant for Trainium 3, AWS has validated its custom silicon strategy in a way that Google’s (NASDAQ: GOOGL) TPU has yet to achieve with external partners. This move positions AWS not just as a provider of generic compute, but as a specialized AI foundry. For NVIDIA (NASDAQ: NVDA), the news is a sobering reminder that its largest customers are also becoming its most formidable competitors. While NVIDIA’s stock has shown resilience due to the sheer volume of global demand, the loss of total dominance over OpenAI’s hardware stack marks the beginning of the "de-NVIDIA-fication" of the AI industry.

Other AI startups are likely to follow OpenAI’s lead. The "roadmap for hardware sovereignty" established by this deal provides a blueprint for labs like Anthropic and Mistral to reduce their hardware overhead. As OpenAI migrates its workloads, the availability of Trainium instances on AWS is expected to surge, creating a more diverse and price-competitive market for AI compute that could lower the barrier to entry for smaller players.

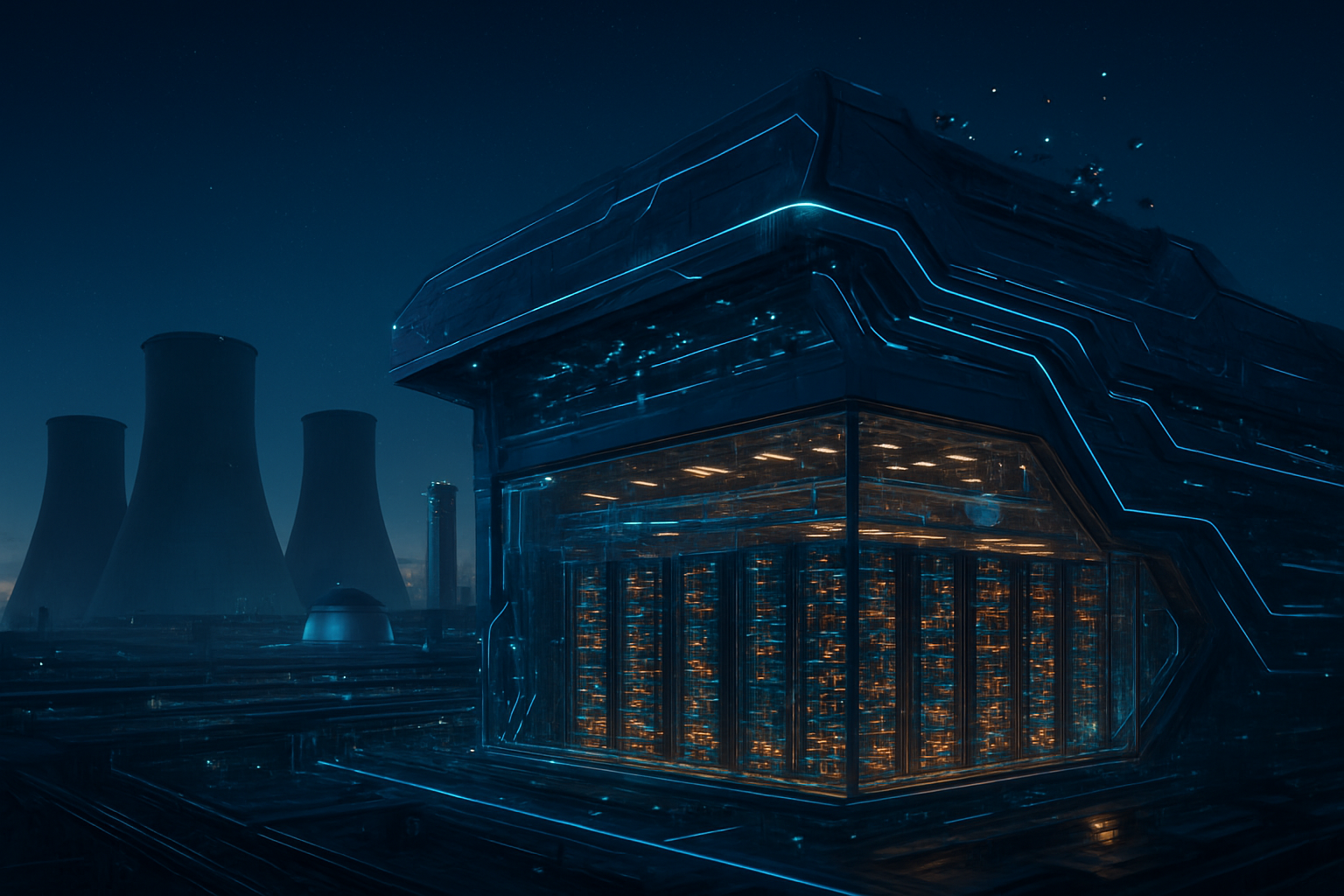

The Wider Significance: Hardware Sovereignty and the $1.4 Trillion Bill

The move toward custom silicon is a response to a looming economic crisis in the AI sector. With OpenAI facing a projected $1.4 trillion compute bill over the next decade, the "NVIDIA Tax"—the high margins commanded by general-purpose GPUs—has become an existential threat. By moving to Trainium 3 and co-developing its own proprietary "XPU" with Broadcom (NASDAQ: AVGO) and TSMC (NYSE: TSM), OpenAI is pursuing "hardware sovereignty." This is a strategic shift comparable to Apple’s transition to its own M-series chips, prioritizing vertical integration to optimize both performance and profit margins.

This development fits into a broader trend of "AI Nationalism" and infrastructure consolidation. As AI models become more integrated into the global economy, the control of the underlying silicon becomes a matter of national and corporate security. The shift away from a single hardware monoculture (CUDA/NVIDIA) toward a multi-polar hardware environment (Trainium, TPU, XPU) will likely lead to more specialized AI models that are "hardware-aware," designed from the ground up to run on specific architectures.

However, this transition is not without concerns. The fragmentation of the AI hardware landscape could lead to a "software tax," where developers must maintain multiple versions of their code for different chips. There are also questions about whether Amazon and OpenAI can maintain the pace of innovation required to keep up with NVIDIA’s annual release cycle. If Trainium 3 falls behind the next generation of NVIDIA’s Rubin chips, OpenAI could find itself locked into inferior hardware, potentially stalling its progress toward Artificial General Intelligence (AGI).

The Road Ahead: Proprietary XPUs and the Rubin Era

Looking forward, the Amazon deal is only the first phase of OpenAI’s silicon ambitions. The company is reportedly working on its own internal inference chip, codenamed "XPU," in partnership with Broadcom (NASDAQ: AVGO). While Trainium will handle the bulk of training and high-scale inference in the near term, the XPU is expected to ship in late 2026 or early 2027, focusing specifically on ultra-low-latency inference for real-time applications like voice and video synthesis.

In the near term, the industry will be watching the first "frontier" model trained entirely on Trainium 3. If OpenAI can demonstrate that its next-generation GPT-5 or "Orion" models perform as well or better on Amazon silicon than on NVIDIA hardware, it could trigger a mass migration of enterprise AI workloads to AWS. Challenges remain, particularly in the scaling of "UltraServers" (clusters of 144 Trainium chips), which must maintain tightly synchronized communication to train the world's largest models.
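For a sense of scale, the per-chip figures quoted earlier in this article can be multiplied out across a 144-chip UltraServer. The Python sketch below does that arithmetic and also estimates the per-chip traffic of a ring all-reduce, a common way to synchronize gradients. The ring algorithm is an assumption made for illustration, since the article does not say which collective Trainium clusters use. All totals are theoretical peaks, not measured throughput.

```python
# Per-chip figures as quoted in the article.
CHIPS_PER_ULTRASERVER = 144
FP8_PFLOPS_PER_CHIP = 2.52
HBM_GB_PER_CHIP = 144


def aggregate_pflops(chips: int = CHIPS_PER_ULTRASERVER,
                     per_chip: float = FP8_PFLOPS_PER_CHIP) -> float:
    """Theoretical peak FP8 compute across all chips, in PFLOPs."""
    return chips * per_chip


def aggregate_hbm_tb(chips: int = CHIPS_PER_ULTRASERVER,
                     gb_per_chip: int = HBM_GB_PER_CHIP) -> float:
    """Total HBM capacity across all chips, in decimal terabytes."""
    return chips * gb_per_chip / 1000


def ring_allreduce_traffic_factor(n: int) -> float:
    """Bytes each chip sends per byte of data reduced in a ring
    all-reduce over n chips: 2 * (n - 1) / n."""
    return 2 * (n - 1) / n


if __name__ == "__main__":
    print(f"peak FP8 compute: {aggregate_pflops():.2f} PFLOPs")
    print(f"total HBM:        {aggregate_hbm_tb():.3f} TB")
    factor = ring_allreduce_traffic_factor(CHIPS_PER_ULTRASERVER)
    print(f"ring all-reduce:  {factor:.3f} bytes sent per byte reduced")
```

On these numbers, one UltraServer tops out at roughly 363 PFLOPs of FP8 compute and about 20.7 TB of HBM. In a ring all-reduce, each chip sends nearly two bytes for every byte of gradient being synchronized, which is why interconnect performance matters as much as raw compute.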

Experts predict that by 2027, the AI hardware market will be split into two distinct tiers: NVIDIA will remain the leader for "frontier training," where absolute performance is the only metric that matters, while custom ASICs like Trainium and OpenAI’s XPU will dominate the "inference economy." This bifurcation will allow for more sustainable growth in the AI sector, as the cost of running AI models begins to drop faster than the models themselves are growing.

Conclusion: A New Chapter in the AI Industrial Revolution

OpenAI’s $10 billion pivot to Amazon Trainium 3 is more than a simple vendor change; it is a declaration of independence. By diversifying its hardware stack and investing heavily in custom silicon, OpenAI is attempting to break the bottlenecks that have constrained AI development since the release of GPT-4. The significance of this move in AI history cannot be overstated—it marks the end of the GPU monoculture and the beginning of a specialized, vertically integrated AI industry.

The key things to watch in the coming months are clear: the performance benchmarks of OpenAI models on AWS, the progress of the Broadcom-designed XPU, and NVIDIA’s strategic response to the erosion of its moat. As OpenAI’s "Silicon Divorce" from its singular reliance on NVIDIA and Microsoft matures, the entire tech industry will have to adapt to a world where the software and the silicon are once again inextricably linked.
