In a move that pushes the boundaries of real-time artificial intelligence, Cerebras Systems has announced a record inference speed for Meta’s (NASDAQ:META) Llama 3.1 405B model: a sustained 969 tokens per second. According to the company, this is the first time a frontier-scale model of this size has run fast enough to feel instantaneous to a human user.

The announcement, made during the Supercomputing 2024 (SC24) conference, signals a paradigm shift in how the industry views large language model (LLM) performance. By overcoming the "memory wall" that has long plagued traditional GPU architectures, Cerebras has demonstrated that even the most complex open-weights models can be deployed with the low latency required for high-stakes, real-time applications.

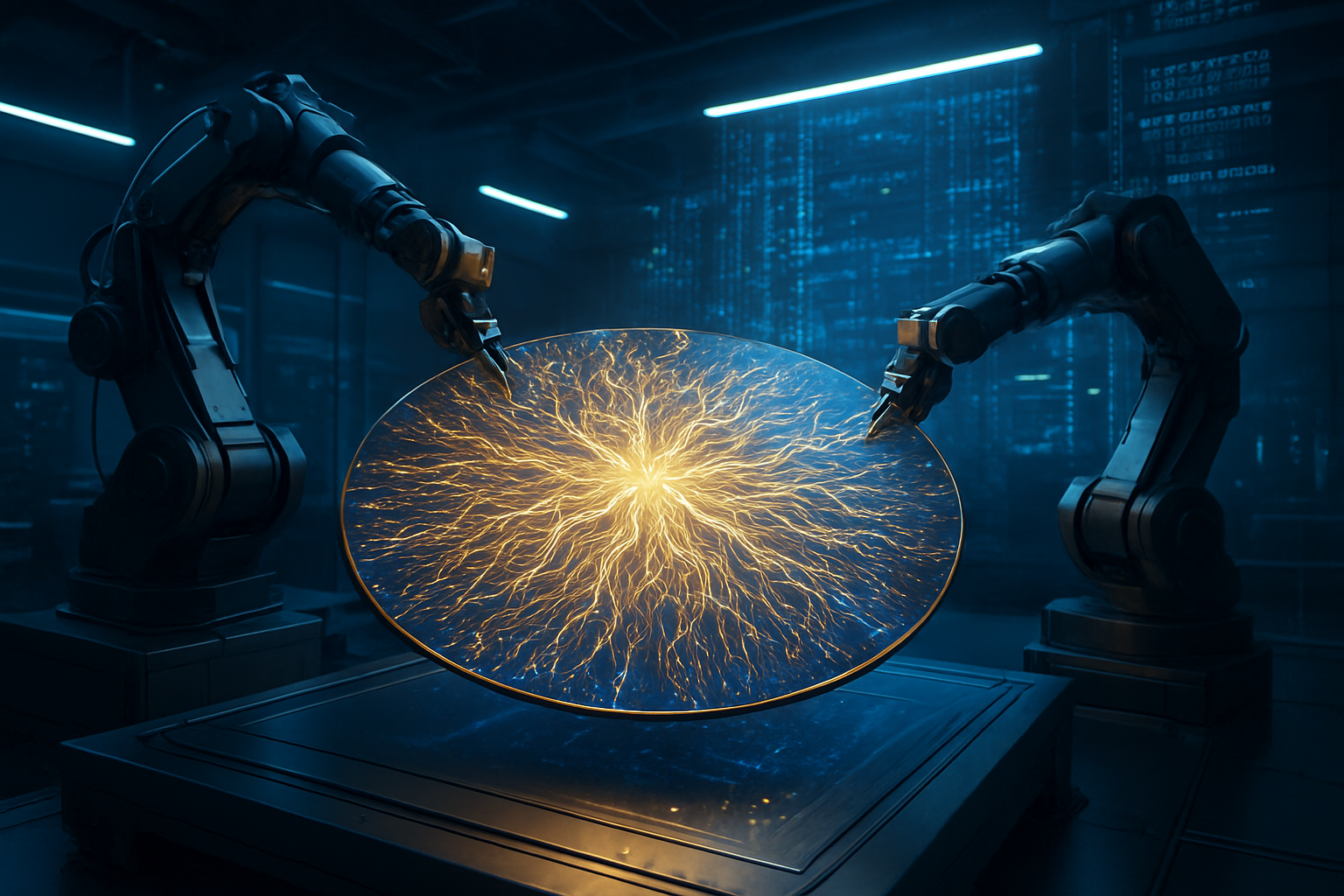

The Engineering Marvel: Inside the Wafer-Scale Engine 3

The backbone of this performance milestone is the Cerebras Wafer-Scale Engine 3 (WSE-3), a processor that departs from traditional semiconductor design. While industry leaders like NVIDIA (NASDAQ:NVDA) rely on clusters of individual chips connected by high-speed links, the WSE-3 is a single, massive piece of silicon the size of a dinner plate. This "wafer-scale" approach allows Cerebras to house 4 trillion transistors and 900,000 AI-optimized cores on a single processor, providing a level of on-die compute density that conventional, reticle-limited chips cannot match.

The WSE-3’s greatest advantage lies in its memory architecture. Traditional GPUs, including the NVIDIA H100 and the newer Blackwell B200, are limited by the bandwidth of external High Bandwidth Memory (HBM). Cerebras bypasses this bottleneck by using 44GB of on-chip SRAM per wafer, which offers 21 petabytes per second of memory bandwidth, roughly 7,000 times that of the H100. This lets model weights be read from on-chip memory instead of making latency-heavy "trips" to external memory, which is what slows down conventional AI clusters. Because the Llama 3.1 405B weights are far larger than 44GB, the model has to be spread across multiple WSE-3 systems rather than held on a single wafer.
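The memory figures quoted here can be sanity-checked with simple arithmetic. The sketch below is only a rough illustration, not a description of how Cerebras actually deploys the model: the 16-bit weight size is an assumption, the H100 bandwidth is inferred from the quoted 7,000x ratio rather than taken from a datasheet, and the throughput bound assumes every weight is read once per generated token for a single request stream.

```python
import math

# Figures quoted in the article
PARAMS = 405e9                # Llama 3.1 405B parameter count
SRAM_PER_WAFER_BYTES = 44e9   # 44 GB of on-chip SRAM per WSE-3
WSE3_BANDWIDTH = 21e15        # 21 PB/s of memory bandwidth
BANDWIDTH_RATIO = 7000        # "roughly 7,000 times" the H100

# Assumption (not from the article): weights stored at 16 bits each
BYTES_PER_PARAM = 2

weight_bytes = PARAMS * BYTES_PER_PARAM

# Minimum number of wafers whose SRAM could hold the weights alone
min_wafers = math.ceil(weight_bytes / SRAM_PER_WAFER_BYTES)

# H100 memory bandwidth implied by the article's own ratio
implied_h100_bandwidth = WSE3_BANDWIDTH / BANDWIDTH_RATIO


def max_tokens_per_second(bandwidth_bytes_per_s, model_bytes):
    """Bandwidth-bound ceiling for single-stream decoding:
    each new token requires streaming all weights once."""
    return bandwidth_bytes_per_s / model_bytes


print(f"weights: {weight_bytes / 1e9:.0f} GB")
print(f"minimum wafers for weights alone: {min_wafers}")
print(f"implied H100 bandwidth: {implied_h100_bandwidth / 1e12:.1f} TB/s")
print(f"single-device ceiling at that bandwidth: "
      f"{max_tokens_per_second(implied_h100_bandwidth, weight_bytes):.1f} tokens/s")
```

Under these assumptions the weights come to about 810 GB, so at least 19 wafers' worth of SRAM would be needed just to hold them, and a single device with H100-class bandwidth would top out at a few tokens per second per stream. That is the "memory wall" in concrete terms.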

Initial reactions from the AI research community have been enthusiastic. Independent benchmarks from Artificial Analysis confirmed that Cerebras’ inference speeds are up to 75 times faster than those offered by major hyperscalers such as Amazon (NASDAQ:AMZN), Microsoft (NASDAQ:MSFT), and Alphabet (NASDAQ:GOOGL). Experts have noted that while GPU-based clusters typically struggle to exceed 10 to 15 tokens per second for a 405B parameter model, Cerebras’ 969 tokens per second effectively moves the bottleneck from the hardware to the human's ability to read.
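To put the "ability to read" remark in numbers, the short sketch below compares the quoted generation rate with an estimated human reading rate. The reading speed and the tokens-per-word ratio are loose assumptions chosen for illustration, not figures from the announcement.

```python
# Figures quoted in the article
CEREBRAS_TPS = 969   # tokens per second on Llama 3.1 405B
SPEEDUP = 75         # "up to 75 times faster" than hyperscaler offerings

# Assumptions (not from the article)
WORDS_PER_MINUTE = 250   # rough silent reading speed for an adult
TOKENS_PER_WORD = 1.3    # rough ratio for English text

# Baseline throughput implied by the quoted speedup
implied_baseline_tps = CEREBRAS_TPS / SPEEDUP

# Approximate rate at which a person consumes tokens while reading
reading_tps = WORDS_PER_MINUTE / 60 * TOKENS_PER_WORD

print(f"implied baseline: {implied_baseline_tps:.1f} tokens/s")
print(f"estimated reading rate: {reading_tps:.1f} tokens/s")
print(f"generation outpaces reading by about {CEREBRAS_TPS / reading_tps:.0f}x")
```

The implied baseline of roughly 13 tokens per second falls inside the 10 to 15 range cited for GPU clusters, and under these assumptions generation runs more than a hundred times faster than a person can read.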

Disruption in the Datacenter: A New Competitive Landscape

This development poses a direct challenge to the dominance of NVIDIA (NASDAQ:NVDA) in the AI inference market. For years, the industry consensus was that while Cerebras was excellent for training, NVIDIA’s CUDA ecosystem and H100/H200 series were the gold standard for deployment. By offering Llama 3.1 405B at such extreme speeds and at a disruptive price point of $6.00 per million input tokens, Cerebras is positioning its "Cerebras Inference" service as a viable, more efficient alternative for enterprises that cannot afford the multi-second latencies of GPU clouds.

The strategic advantage for AI startups and labs is significant. Companies building "Agentic AI"—systems that must perform dozens of internal reasoning steps before providing a final answer—can now do so in seconds rather than minutes. This speed makes Llama 3.1 405B a formidable competitor to closed models like GPT-4o, as developers can now access "frontier" intelligence with "small model" responsiveness. This could lead to a migration of developers away from proprietary APIs toward open-weights models hosted on specialized inference hardware.
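The "seconds rather than minutes" claim for agentic workloads follows from how sequential generation steps add up. The sketch below is a simplified model: the step count, the tokens generated per step, and the 13 tokens-per-second GPU baseline are illustrative assumptions, and it ignores prompt processing, network latency, and tool-call time.

```python
def agent_latency_seconds(steps, tokens_per_step, tokens_per_second,
                          overhead_per_step_s=0.0):
    """Total wall-clock time for an agent whose reasoning steps run
    one after another, each generating a fixed number of tokens."""
    return steps * (tokens_per_step / tokens_per_second + overhead_per_step_s)


# Assumptions (hypothetical workload, not from the article)
STEPS = 24             # "dozens" of internal reasoning steps
TOKENS_PER_STEP = 500  # tokens generated at each step

CEREBRAS_TPS = 969     # quoted in the article
GPU_BASELINE_TPS = 13  # assumed, within the article's 10 to 15 range

fast = agent_latency_seconds(STEPS, TOKENS_PER_STEP, CEREBRAS_TPS)
slow = agent_latency_seconds(STEPS, TOKENS_PER_STEP, GPU_BASELINE_TPS)

print(f"at {CEREBRAS_TPS} tokens/s: {fast:.1f} s")
print(f"at {GPU_BASELINE_TPS} tokens/s: {slow / 60:.1f} min")
```

With these inputs the same 24-step chain takes on the order of ten seconds at the quoted rate and around fifteen minutes at the assumed GPU baseline. Because the steps are sequential, the gap grows linearly with the number of steps.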

Furthermore, the pressure on cloud giants like Microsoft (NASDAQ:MSFT) and Alphabet (NASDAQ:GOOGL) to integrate or compete with wafer-scale technology is mounting. While these companies have invested billions in NVIDIA-based infrastructure, the sheer performance gap demonstrated by Cerebras may force a diversification of their AI hardware stacks. Startups like Groq and SambaNova, which also focus on high-speed inference, now find themselves in a high-stakes arms race where Cerebras has set a new, incredibly high bar for the industry's largest models.

The "Broadband Moment" for Artificial Intelligence

Cerebras CEO Andrew Feldman has characterized this breakthrough as the "broadband moment" for AI, comparing it to the transition from dial-up to high-speed internet. Just as broadband enabled video streaming and complex web applications that were previously impossible, sub-second inference for 400B+ parameter models enables a new class of "thinking" machines. This shift is expected to accelerate the transition from simple chatbots to sophisticated AI agents capable of real-time multi-step planning, coding, and complex decision-making.

The broader significance lies in the democratization of high-end AI. Previously, the "instantaneous" feel of AI was reserved for smaller, less capable models like Llama 3 8B or GPT-4o-mini. By making the world’s largest open-weights model feel just as fast, Cerebras is removing the trade-off between intelligence and speed. This has profound implications for fields like medical diagnostics, real-time financial fraud detection, and interactive education, where both high-level reasoning and immediate feedback are critical.

However, this leap forward also brings potential concerns regarding the energy density and cost of wafer-scale hardware. While the inference service is priced competitively, the underlying CS-3 systems are multi-million dollar investments. The industry will be watching closely to see if Cerebras can scale its physical infrastructure fast enough to meet the anticipated demand from enterprises eager to move away from the high-latency "waiting room" of current LLM interfaces.

The Road to WSE-4 and Beyond

Looking ahead, the trajectory for Cerebras suggests even more ambitious milestones. With the WSE-3 already pushing the limits of what a single wafer can do, speculation has turned toward the WSE-4 and the potential for even larger models. As Meta (NASDAQ:META) and other labs look toward 1-trillion-parameter models, the wafer-scale architecture may become the only viable way to serve such models with acceptable user experience latencies.

In the near term, expect to see an explosion of "Agentic" applications that leverage this speed. We are likely to see AI coding assistants that can refactor entire codebases in seconds or legal AI that can cross-reference thousands of documents in real time. The challenge for Cerebras will be maintaining this performance as context windows continue to expand and as more users flock to its inference platform, testing the limits of its provisioned throughput.

A Landmark Achievement in AI History

Cerebras Systems’ achievement of 969 tokens per second on Llama 3.1 405B is more than just a benchmark; it is a fundamental shift in the AI hardware landscape. By proving that wafer-scale integration can solve the memory bottleneck, Cerebras has provided a blueprint for the future of AI inference. This milestone effectively ends the era where "large" necessarily meant "slow," opening the door for frontier-grade intelligence to be integrated into every aspect of real-time digital interaction.

As we move into 2026, the industry will be watching to see how NVIDIA (NASDAQ:NVDA) and other chipmakers respond to this architectural challenge. For now, Cerebras holds the crown for the world’s fastest inference, providing the "instant" intelligence that the next generation of AI applications demands. The "broadband moment" has arrived, and the way we interact with the world’s most powerful models will never be the same.
